Overview of Web Security Policies

Filed under: Development, Security, Testing

A vulnerability was just identified in your website. How would you know?

For someone outside an organization, figuring out how to disclose a vulnerability to it is often very difficult. Whether or not you offer any type of bounty for security bugs, it is important that there is a clear path for someone to notify you of a potential concern.

Unfortunately, the process differs from one application to the next, and it can be very difficult to find. For someone who is just trying to help out, this can be very frustrating as well. Some websites have a separate security page with contact information. Other sites just list a security email address on the contact us page. Many sites give no clear indication of how to report such a finding. We could try the organization's security@ email address, but has it actually been configured?

In an effort to standardize how to find this information, there is a draft specification that defines a method for publishing web security policies. You can read the draft at https://tools.ietf.org/html/draft-foudil-securitytxt-03. Its goal is to define a text file at a known path that gives users the contact information they need to submit potential security concerns.

How it works

The first step is to create a security.txt file that describes your web security policy. According to the specification, this file should be placed in the .well-known directory, which makes it available at /.well-known/security.txt. In some circumstances, it may also be found at /security.txt.

The purpose of pinning down the name of the file and where it should be located is to limit the searching process. If someone finds an issue, they know where to go to find the right contact information or process.

The next step is to put the relevant information into the security.txt file. The draft documentation covers this in depth, but I want to give a quick example of what this may look like:

Security.txt

-- Start of File --

# This is a sample security.txt file
contact: mailto:james@developsec.com
contact: tel:+1-904-638-5431
# Encryption - This links to my public PGP Key
Encryption: https://www.jardinesoftware.com/jamesjardine-public.txt
# Policy - Links to a policy page outlining what you are looking for
Policy: https://www.jardinesoftware.com/security-policy
# Acknowledgments - If you have a page that acknowledges users that have submitted a valid bug
Acknowledgments: https://www.jardinesoftware.com/acknowledgments
# Hiring - If you offer security related jobs, put the link to that page here
Hiring: https://www.jardinesoftware.com/jobs
# Signature - To help secure your file, create a signature file and reference it here
Signature: https://www.jardinesoftware.com/.well-known/security.txt.sig

-- End of File --

I included some comments in the sample above to show what each item is for. A key point is that very little policy information is actually included in the file; instead, it is linked by reference. For example, the PGP key is not embedded in the file, only a link to the key.

The goal of the file is to be in a well defined location and provide references to your different security policies and procedures.
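Because the file is just a list of "Field: value" lines plus # comments, a tool that wants the contact information only needs a few lines of code to read it. The following is a minimal C# sketch; the `SecurityTxt` class and its `Parse` method are hypothetical names, not part of any library. It assumes field names are compared case-insensitively and that a field such as Contact may appear more than once, and it does not verify the signature.

```csharp
using System;
using System.Collections.Generic;

public static class SecurityTxt
{
    // Collects "Field: value" lines into a case-insensitive dictionary.
    // Each field maps to a list because fields like Contact may repeat.
    public static Dictionary<string, List<string>> Parse(string text)
    {
        var fields = new Dictionary<string, List<string>>(StringComparer.OrdinalIgnoreCase);
        foreach (var rawLine in text.Split('\n'))
        {
            var line = rawLine.Trim(); // also removes a trailing '\r'
            if (line.Length == 0 || line.StartsWith("#"))
                continue; // skip blank lines and comments

            int colon = line.IndexOf(':');
            if (colon <= 0)
                continue; // not a "Field: value" line

            // Split on the first colon only, so values such as
            // "mailto:..." or "https://..." stay intact.
            string name = line.Substring(0, colon).Trim();
            string value = line.Substring(colon + 1).Trim();

            if (!fields.TryGetValue(name, out var values))
            {
                values = new List<string>();
                fields[name] = values;
            }
            values.Add(value);
        }
        return fields;
    }
}
```

For example, `SecurityTxt.Parse(text)["Contact"]` returns both contact entries from the sample file above, whether they are written as contact or Contact.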

WHAT DO YOU THINK?

So I am curious: what do you think about this technique? While it is still in draft status, it is an interesting concept. It gives organizations a known location for publishing this type of information.

I don’t believe it requires you to create a bug bounty program, or to promote security testing of your site without permission. However, it does at least provide a way to share your requests and to provide information to someone who finds a flaw and wants to share it with you.

Will we see this move forward, or do you think it will not catch on? If it is a good idea, what is the best way to raise awareness of it?

Security Tips for Copy/Paste of Code From the Internet

Filed under: Development, Security

Developing applications has long involved using code snippets found in textbooks or on the Internet. Rather than reinvent the wheel, it makes sense to find existing code that helps solve a problem. It can also speed up development.

About 12 years ago, a co-worker of mine had a SQL Injection vulnerability in his application. The culprit: code copied from someone else. At the time, I explained that once you copy code into your application, it is your responsibility.

Now, 12 years later, I still see this happen: code snippets taken directly from the web and used in the application. In many of these cases there may be some form of security weakness. How often do we, as developers, really analyze and understand all the details of the code we copy?

Here are a few tips when working with external code brought into your application.

Understand what it does

If you went looking for a code snippet, you should have a good idea of what the code will do, or at least of what you think it will do. How rigorously do you inspect it to make sure that is all it does? The code may perform the specific task you set out to complete, but what if it also contains other functions you weren’t looking for? This may not be much of a concern with very small snippets. However, larger sections of code can hide other functionality. That functionality is not necessarily malicious, but undocumented, unintended functionality may open up risk to the application.

Change any passwords or secrets

Depending on the code you find, it may contain secrets. For example, encryption routines are commonly grabbed off the Internet, and to be complete examples they often contain hard-coded IVs and keys. These should be changed to something unique when the code is imported into your project. The same applies to code that contains passwords or other hard-coded values that may provide access to the system.
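As a sketch of what changing the secrets can look like for an encryption snippet, the C# below generates a random key and a random IV at runtime with `Aes.Create()` instead of keeping the values that were pasted in along with the code. It assumes the snippet uses AES with a 256-bit key. The `KeyMaterial` class is a hypothetical name, and where you store the key afterward is left to your application.

```csharp
using System;
using System.Security.Cryptography;

public static class KeyMaterial
{
    // A fresh random 256-bit key. Generate it once and store it securely,
    // outside of source control, instead of hard-coding it.
    public static byte[] NewKey()
    {
        using (var aes = Aes.Create())
        {
            aes.KeySize = 256;
            aes.GenerateKey();
            return aes.Key; // the getter returns a copy, so it outlives the Dispose
        }
    }

    // A fresh random IV (16 bytes for AES). Use a new one for every
    // encryption; it is not secret and is usually stored with the ciphertext.
    public static byte[] NewIv()
    {
        using (var aes = Aes.Create())
        {
            aes.GenerateIV();
            return aes.IV;
        }
    }
}
```

The key is generated once per application; the IV is generated per message, which is exactly what a hard-coded IV in a copied snippet prevents.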

As I was writing this, I noticed a post about the default settings of the RadAsyncUpload control. While that is not code copied and pasted from the Internet, it highlights the need to understand default configurations, and that some of those values should be changed to provide better protection.

Look for potential vulnerabilities

In addition to the above concerns, the code may contain vulnerabilities. Imagine a snippet of code used to select data from a SQL database. What if that code passes your tests because it pulls back the right data, but it builds inline SQL and is vulnerable to SQL Injection? The same could happen with code that is vulnerable to Cross-Site Scripting or that does not perform proper authorization checks.
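The Cross-Site Scripting case is easy to picture. Both methods below would pass a functional test that greets the user by name, but only the second is safe to use with untrusted input. This is a minimal C# sketch using `System.Net.WebUtility.HtmlEncode`; the `Greeting` class is hypothetical, and it assumes the value is written into the body of an HTML element, since other contexts such as JavaScript or URLs need their own encoding.

```csharp
using System.Net;

public static class Greeting
{
    // What a copied snippet might look like: it works in testing, but
    // untrusted input is concatenated straight into the markup (XSS).
    public static string Unsafe(string name)
    {
        return "<p>Hello, " + name + "</p>";
    }

    // Reviewed version: the input is HTML-encoded before it is placed in
    // the markup, so characters like < and > are rendered as text.
    public static string Safe(string name)
    {
        return "<p>Hello, " + WebUtility.HtmlEncode(name) + "</p>";
    }
}
```

Given the input `<script>alert(1)</script>`, the safe version produces `<p>Hello, &lt;script&gt;alert(1)&lt;/script&gt;</p>`.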

We have to do a better job of reviewing these external snippets, just as we should be reviewing our own custom-written code. Finding snippets that perform the functionality we need can be a huge benefit, but we can’t just assume they are production ready. If you are using this type of code, take the time to understand it and review it for potential issues. Don’t stop at verifying the functionality. Vet the code just as you would any other code within your application.

XXE and .Net

XXE, or XML External Entity, is an attack against applications that parse XML. It occurs when XML input contains a reference to an external entity, such as a local file, and the parser resolves it even though the input should never have had access to that resource. In this article, I will discuss how .Net handles XML for certain objects and how to configure these objects to block XXE attacks. It is important to understand that different versions of the .Net framework handle this differently, and I will point out the differences for each object.

I will cover XmlReader, XmlTextReader, and XmlDocument. Here is a quick summary of the default settings:

| Object | .Net version | Safe by default? |
|---|---|---|
| XmlReader | Prior to 4.0 | Yes |
| XmlReader | 4.0+ | Yes |
| XmlTextReader | Prior to 4.0 | No |
| XmlTextReader | 4.0+ | No |
| XmlDocument | 4.5 and earlier | No |
| XmlDocument | 4.6 | Yes |

XmlReader

Prior to 4.0

The ProhibitDtd property is used to determine if a DTD will be parsed.

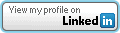

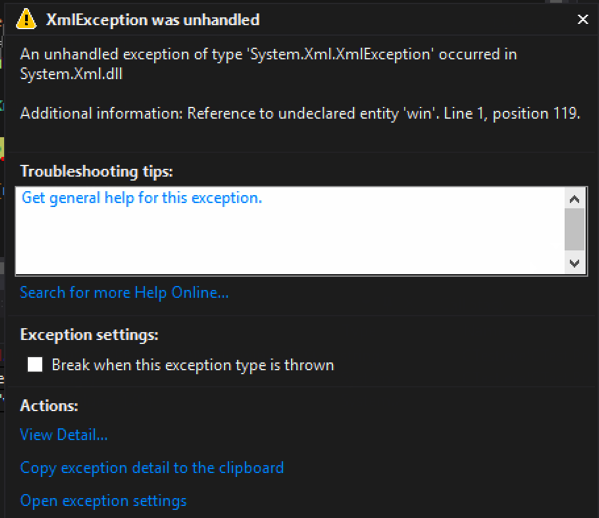

- True (default) – throws an exception if a DTD is identified. (See Figure 1)

- False – Allows parsing the DTD. (Potentially Vulnerable)

Code that throws an exception when a DTD is processed: By default, ProhibitDtd is set to true, so the reader throws an exception as soon as it encounters the DTD.

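static void Reader()
{
    // Requires: using System; using System.IO; using System.Xml;
    // The payload is joined with + because a regular C# string literal cannot span lines.
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    // No settings passed: ProhibitDtd defaults to true, so Read() throws on the DTD.
    XmlReader myReader = XmlReader.Create(new StringReader(xml));
    while (myReader.Read())
    {
        Console.WriteLine(myReader.Value);
    }
    Console.ReadLine();
}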

Exception when executed:

[Figure 1]

Code that allows a DTD to be processed: Using the XmlReaderSettings object, it is possible to allow the DTD, and therefore the entity, to be parsed. This could make your application vulnerable to XXE.

static void Reader()
{
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    // Explicitly allowing DTDs lets the external entity be resolved (potentially vulnerable).
    XmlReaderSettings rs = new XmlReaderSettings();
    rs.ProhibitDtd = false;
    XmlReader myReader = XmlReader.Create(new StringReader(xml), rs);
    while (myReader.Read())
    {
        Console.WriteLine(myReader.Value);
    }
    Console.ReadLine();
}

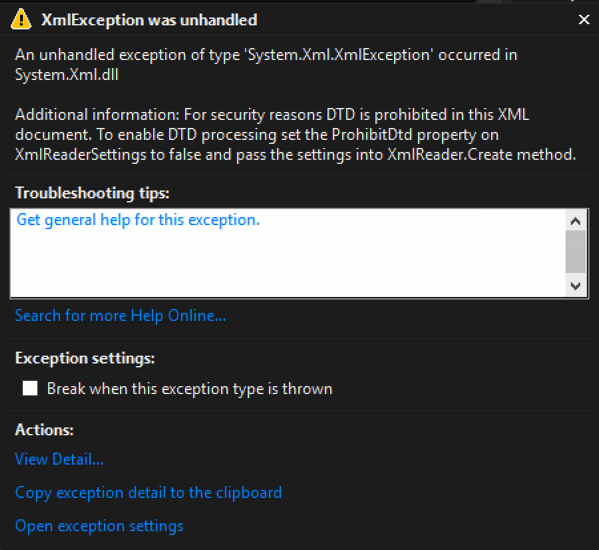

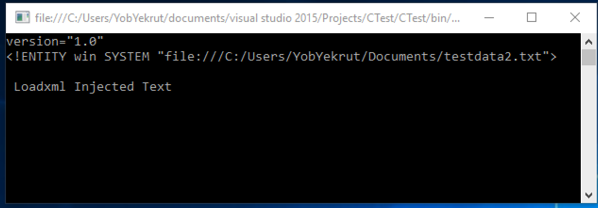

Output when executed showing injected text:

[Figure 2]

.Net 4.0+

In .Net 4.0, the ProhibitDtd property was deprecated in favor of the new DtdProcessing property, which uses the DtdProcessing enumeration. There are now three (3) options:

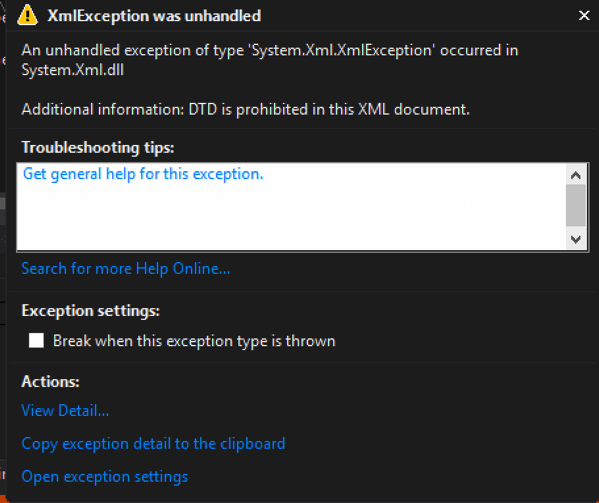

- Prohibit (default) – Throws an exception if a DTD is identified.

- Ignore – Ignores any DTD specifications in the document, skipping over them and continuing to process the document.

- Parse – Will parse any DTD specifications in the document. (Potentially Vulnerable)

Code that throws an exception when a DTD is processed: By default, DtdProcessing is set to Prohibit, which rejects the DTD (and with it any external entities) and keeps the code safe.

static void Reader()
{
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    // No settings passed: DtdProcessing defaults to Prohibit, so Read() throws on the DTD.
    XmlReader myReader = XmlReader.Create(new StringReader(xml));
    while (myReader.Read())
    {
        Console.WriteLine(myReader.Value);
    }
    Console.ReadLine();
}

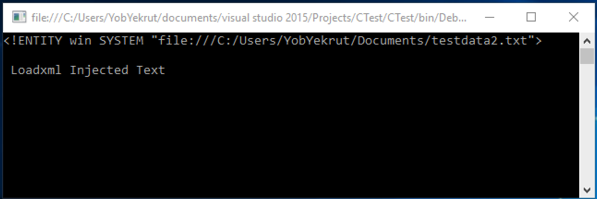

Exception when executed:

[Figure 3]

Code that ignores DTDs and continues processing: Using the XmlReaderSettings object, setting DtdProcessing to Ignore skips the DTD, so its entities are never defined. In this case, an exception was still thrown because the document references an entity (&win;) that was skipped.

static void Reader()
{
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    // Ignore skips the DTD, so &win; is never declared and Read() throws when it reaches it.
    XmlReaderSettings rs = new XmlReaderSettings();
    rs.DtdProcessing = DtdProcessing.Ignore;
    XmlReader myReader = XmlReader.Create(new StringReader(xml), rs);
    while (myReader.Read())
    {
        Console.WriteLine(myReader.Value);
    }
    Console.ReadLine();
}

Output when executed ignoring the DTD (Exception due to trying to use the unprocessed entity):

[Figure 4]

Code that allows a DTD to be processed: Using the XmlReaderSettings object, setting DtdProcessing to Parse will allow processing the entities. This potentially makes your code vulnerable.

static void Reader()
{
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    // Parse processes the DTD, so the external entity can be resolved (potentially vulnerable).
    XmlReaderSettings rs = new XmlReaderSettings();
    rs.DtdProcessing = DtdProcessing.Parse;
    XmlReader myReader = XmlReader.Create(new StringReader(xml), rs);
    while (myReader.Read())
    {
        Console.WriteLine(myReader.Value);
    }
    Console.ReadLine();
}

Output when executed showing injected text:

[Figure 5]
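If you create readers in many places, it may help to centralize the safe settings. The following C# sketch (the `SafeXml` class and `CreateReader` method are hypothetical names) uses the .Net 4.0+ `DtdProcessing.Prohibit` setting shown above and also sets `XmlResolver` to null, so nothing external is fetched even if the DTD setting is later relaxed. It assumes the XML you accept never needs a DTD.

```csharp
using System.IO;
using System.Xml;

public static class SafeXml
{
    // Creates an XmlReader that rejects any document containing a DTD.
    public static XmlReader CreateReader(string xml)
    {
        var settings = new XmlReaderSettings
        {
            DtdProcessing = DtdProcessing.Prohibit, // throw an XmlException when a DTD is found
            XmlResolver = null                      // never resolve external resources
        };
        return XmlReader.Create(new StringReader(xml), settings);
    }
}
```

Callers wrap the reader in a using block, for example `using (var reader = SafeXml.CreateReader(input)) { ... }`, and treat an XmlException as rejected input.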

XmlTextReader

The XmlTextReader uses the same properties as the XmlReader object, but there is one big difference: the XmlTextReader defaults to parsing XML entities, so you need to explicitly tell it not to.

Prior to 4.0

The ProhibitDtd property is used to determine if a DTD will be parsed.

- True – throws an exception if a DTD is identified. (See Figure 1)

- False (Default) – Allows parsing the DTD. (Potentially Vulnerable)

Code that allows a DTD to be processed (potentially vulnerable): By default, the XmlTextReader sets the ProhibitDtd property to false, allowing entities to be parsed and leaving the code potentially vulnerable.

static void TextReader()
{
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    // ProhibitDtd defaults to false on XmlTextReader, so the entity can be expanded.
    XmlTextReader myReader = new XmlTextReader(new StringReader(xml));
    while (myReader.Read())
    {
        if (myReader.NodeType == XmlNodeType.Element)
        {
            Console.WriteLine(myReader.ReadElementContentAsString());
        }
    }
    Console.ReadLine();
}

Code that blocks the DTD from being parsed and throws an exception: Explicitly setting the ProhibitDtd property to true blocks DTDs from being processed, making the code safe from XXE. Notice that the ProhibitDtd property is set directly on the XmlTextReader; it doesn’t have to use the XmlReaderSettings object.

static void TextReader()
{
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    XmlTextReader myReader = new XmlTextReader(new StringReader(xml));
    // Explicitly prohibiting DTDs makes Read() throw when it reaches the DOCTYPE.
    myReader.ProhibitDtd = true;
    while (myReader.Read())
    {
        if (myReader.NodeType == XmlNodeType.Element)
        {
            Console.WriteLine(myReader.ReadElementContentAsString());
        }
    }
    Console.ReadLine();
}

4.0+

In .Net 4.0, the ProhibitDtd property was deprecated in favor of the new DtdProcessing property, which uses the DtdProcessing enumeration. There are now three (3) options:

- Prohibit – Throws an exception if a DTD is identified.

- Ignore – Ignores any DTD specifications in the document, skipping over them and continuing to process the document.

- Parse (Default) – Will parse any DTD specifications in the document. (Potentially Vulnerable)

Code that allows a DTD to be processed (potentially vulnerable): By default, the XmlTextReader sets DtdProcessing to Parse, making the code potentially vulnerable to XXE.

static void TextReader()
{
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    // DtdProcessing defaults to Parse on XmlTextReader, so the entity can be expanded.
    XmlTextReader myReader = new XmlTextReader(new StringReader(xml));
    while (myReader.Read())
    {
        if (myReader.NodeType == XmlNodeType.Element)
        {
            Console.WriteLine(myReader.ReadElementContentAsString());
        }
    }
    Console.ReadLine();
}

Code that blocks the DTD from being parsed: To block entities from being parsed, you must explicitly set the DtdProcessing property to Prohibit or Ignore. Note that this is set directly on the XmlTextReader, not through the XmlReaderSettings object.

static void TextReader()
{
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    XmlTextReader myReader = new XmlTextReader(new StringReader(xml));
    // Prohibit (or Ignore) must be set explicitly on XmlTextReader.
    myReader.DtdProcessing = DtdProcessing.Prohibit;
    while (myReader.Read())
    {
        if (myReader.NodeType == XmlNodeType.Element)
        {
            Console.WriteLine(myReader.ReadElementContentAsString());
        }
    }
    Console.ReadLine();
}

Output when Dtd is prohibited:

[Figure 6]

XmlDocument

For XmlDocument, you need to replace the default XmlResolver to prevent external entities from being resolved.

.Net 4.5 and Earlier

By default, XmlDocument uses an XmlUrlResolver, which resolves external entities referenced in the XML document. To prevent this, set XmlResolver = null.

Code that does not set the XmlResolver (potentially vulnerable): The default XmlResolver resolves external entities, making the following code potentially vulnerable.

static void Load()
{
    string fileName = @"C:\Users\user\Documents\test.xml";
    XmlDocument xmlDoc = new XmlDocument();
    xmlDoc.Load(fileName);
    Console.WriteLine(xmlDoc.InnerText);
    Console.ReadLine();
}

Code that sets the XmlResolver to null, blocking external entities from being resolved: To block external entities, you must explicitly set XmlResolver to null. This example uses LoadXml instead of Load, but both behave the same in this case.

static void LoadXML()
{
    string xml = "<?xml version=\"1.0\" ?><!DOCTYPE doc " +
        "[<!ENTITY win SYSTEM \"file:///C:/Users/user/Documents/testdata2.txt\">]" +
        "><doc>&win;</doc>";

    XmlDocument xmlDoc = new XmlDocument();
    xmlDoc.XmlResolver = null; // no resolver, so external entities are not fetched
    xmlDoc.LoadXml(xml);
    Console.WriteLine(xmlDoc.InnerText);
    Console.ReadLine();
}

.Net 4.6

It appears that in .Net 4.6, the XmlResolver defaults to null, making XmlDocument safe. However, you can still set the XmlResolver explicitly, just as prior to 4.6 (see the previous code snippet).
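Rather than depending on the framework version's default, you can make XmlDocument safe on any version by setting XmlResolver to null yourself and loading through a reader that prohibits DTDs. A minimal C# sketch, assuming the XML you accept never needs a DTD (`SafeXmlDocument` is a hypothetical name):

```csharp
using System.IO;
using System.Xml;

public static class SafeXmlDocument
{
    // Loads XML into an XmlDocument without resolving external resources
    // and without accepting a DTD at all.
    public static XmlDocument Load(string xml)
    {
        var doc = new XmlDocument { XmlResolver = null }; // do not rely on the version default

        var settings = new XmlReaderSettings
        {
            DtdProcessing = DtdProcessing.Prohibit, // an XmlException is thrown if a DTD is present
            XmlResolver = null
        };

        using (var reader = XmlReader.Create(new StringReader(xml), settings))
        {
            doc.Load(reader);
        }
        return doc;
    }
}
```

Loading `<doc>hello</doc>` works as usual, while the payload used throughout this article is rejected with an XmlException.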

Open Redirect – Bad Implementation

I was recently looking through some code and happened to stumble across some logic that attempts to prevent the application from redirecting to an external site. While this sounds like a simple task, it is commonly implemented incorrectly. Let’s look at the check being performed.

string url = Request.QueryString["returnUrl"];
if (string.IsNullOrWhiteSpace(url) || !url.StartsWith("/"))
{
    Response.Redirect("~/default.aspx");
}
else
{
    Response.Redirect(url);
}

The first thing I noticed was the line that checks whether the url starts with a “/” character. This is a common mistake when developers try to stop open redirection. The assumption is that redirecting to an external site requires a protocol, for example http://www.developsec.com, and by forcing the url to start with the “/” character it is impossible to get the “http:” in there. Unfortunately, a url such as //www.developsec.com (a protocol-relative url) is also interpreted as an absolute url. In the example above, passing in returnUrl=//www.developsec.com makes the code see the leading “/” character and allow the redirect. The browser interprets the “//” as the start of an absolute url and navigates to www.developsec.com.

After putting a test case together, I quickly proved the point and was able to bypass this logic to redirect to external sites.

Checking for Absolute or Relative Paths

.Net has built-in methods for determining whether a path is relative or absolute. The following code shows one way of doing this.

string url = Request.QueryString["returnUrl"];
Uri result;
// Absolute urls, including protocol-relative ones such as //www.developsec.com, are not allowed.
bool isAbsolute = Uri.TryCreate(url, UriKind.Absolute, out result);
if (!string.IsNullOrWhiteSpace(url) && !isAbsolute)
{
    Response.Redirect(url);
}
else
{
    Response.Redirect("~/default.aspx");
}

In the above example, if the URL is absolute (starts with a protocol, http/https, or starts with “//”) it will just redirect to the default page. If the url is not absolute, but relative, it will redirect to the url passed in.

While doing some research I came across a recommendation to use the following:

if (Uri.IsWellFormedUriString(returnUrl,UriKind.Relative))

When using the above logic, //www.developsec.com was flagged as a relative path, which is not what we want. The previous logic correctly identified it as an absolute url. There may be other ways of doing this, and MVC provides some other functions as well that we will cover in a different post.
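Another option is to skip URI parsing and accept only paths that are clearly local to the application. The C# sketch below follows an approach along the lines of MVC's Url.IsLocalUrl helper, but it is a hypothetical standalone version (`RedirectCheck.IsLocalUrl` is not a framework API). It allows "/path" and "~/path" and rejects everything else, including "//host", "/\host" (browsers generally treat the backslash like a forward slash), and values containing control characters such as tabs, which browsers may strip before navigating. It also avoids depending on how Uri.TryCreate classifies strings that begin with "/", which may vary by platform (for example, .NET Core on Linux may treat them as absolute file paths).

```csharp
public static class RedirectCheck
{
    // Returns true only for application-local paths such as "/account/home"
    // or "~/default.aspx"; everything else should go to a safe default page.
    public static bool IsLocalUrl(string url)
    {
        if (string.IsNullOrWhiteSpace(url))
            return false;

        // Browsers may ignore tabs and new lines inside a url, so "/\t/host"
        // could end up as "//host". Reject any control character outright.
        foreach (char c in url)
        {
            if (char.IsControl(c))
                return false;
        }

        if (url[0] == '/')
        {
            // "/" alone is local; "//host" and "/\host" point at another host.
            return url.Length == 1 || (url[1] != '/' && url[1] != '\\');
        }

        if (url.Length > 1 && url[0] == '~' && url[1] == '/')
        {
            // App-relative path: apply the same check to what follows "~".
            return url.Length == 2 || (url[2] != '/' && url[2] != '\\');
        }

        // Full urls ("http://...", "javascript:...") and anything else are
        // rejected; this is a deliberately conservative default.
        return false;
    }
}
```

In the handler from this post, that would look like `if (RedirectCheck.IsLocalUrl(url)) Response.Redirect(url); else Response.Redirect("~/default.aspx");`.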

Conclusion

Make sure that you have a solid understanding of the problem and the different ways it can be exploited. It is easy to overlook some of these techniques. There is a lot to learn, and we should be learning every day.

.Net EnableHeaderChecking

How often do you take untrusted input and insert it into response headers? This could be in a custom header or in the value of a cookie. Untrusted user data is always a concern when it comes to the security side of application development and response headers are no exception. This is referred to as Response Splitting or HTTP Header Injection.

Like Cross Site Scripting (XSS), HTTP Header Injection is an attack that results from untrusted data being used in a response. In this case, it is in a response header which means that the context is what we need to focus on. Remember that in XSS, context is very important as it defines the characters that are potentially dangerous. In the HTML context, characters like < and > are very dangerous. In Header Injection the greatest concern is over the carriage return (%0D or \r) and new line (%0A or \n) characters, or CRLF. Response headers are separated by CRLF, indicating that if you can insert a CRLF then you can start creating your own headers or even page content.

Manipulating the headers may allow you to redirect the user to a different page, perform cross-site scripting attacks, or even rewrite the page. While commonly overlooked, this is a very dangerous flaw.

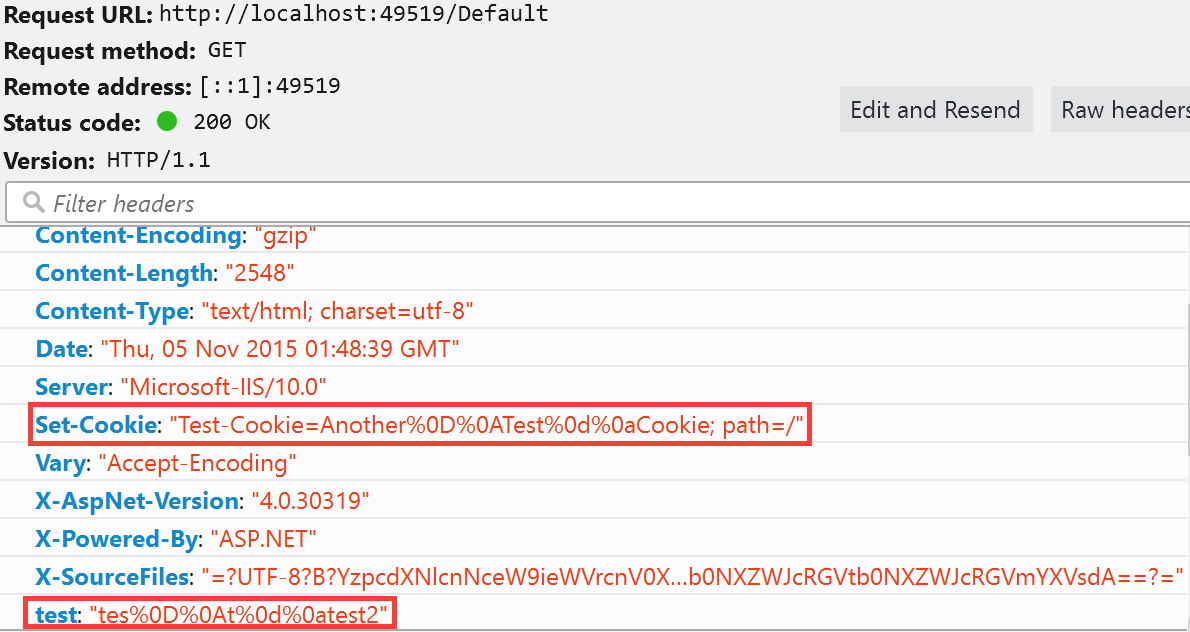

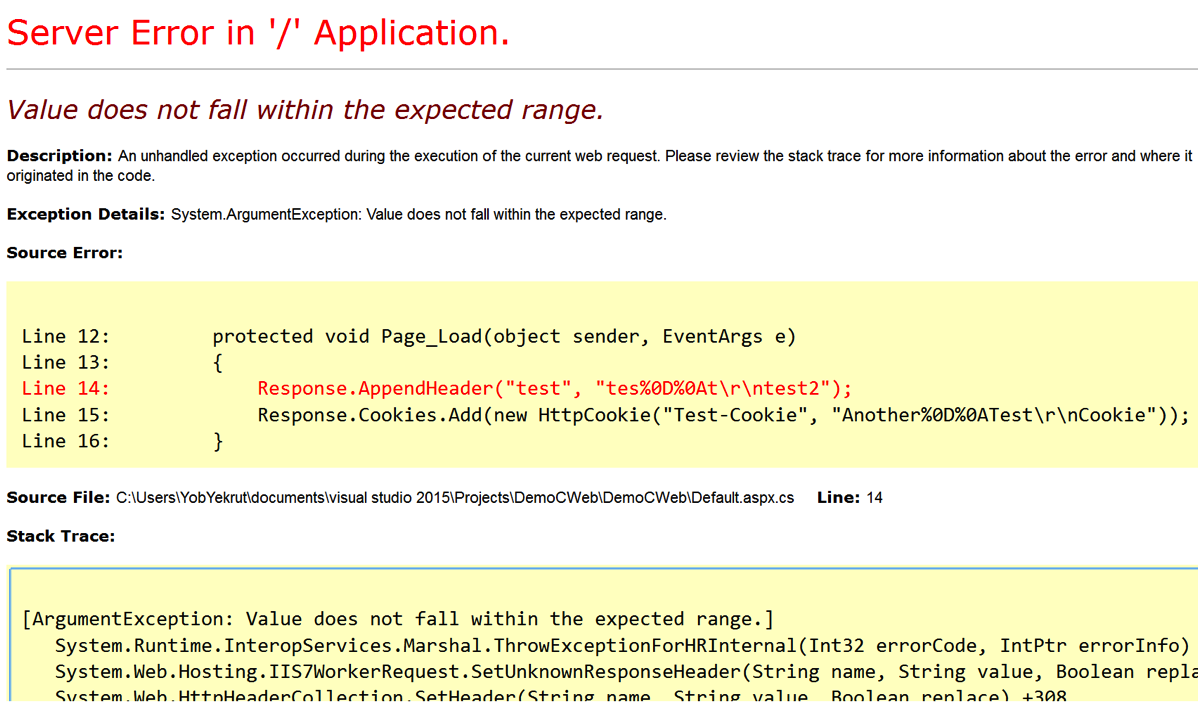

ASP.Net has a built-in defense mechanism, enabled by default, called EnableHeaderChecking. When it is enabled, CRLF characters are converted to %0D%0A, so the browser does not recognize them as a new line; they are displayed as plain characters instead of creating a line break. The following code snippet shows how the response headers look when CRLF is added to a header.

public partial class _Default : Page
{
    protected void Page_Load(object sender, EventArgs e)
    {
        Response.AppendHeader("test", "tes%0D%0At\r\ntest2");
        Response.Cookies.Add(new HttpCookie("Test-Cookie", "Another%0D%0ATest\r\nCookie"));
    }
}

When the application runs, it will properly encode the CRLF as shown in the image below.

My next step was to disable EnableHeaderChecking in the web.config file.

<httpRuntime targetFramework="4.6" enableHeaderChecking="false"/>

My expectation was that I would get a response that allowed the CRLF and would show me a line break in the response headers. To my surprise, I got the error message below:

So why the error? After doing a little Googling I found an article about ASP.Net 2.0 Breaking Changes on IIS 7.0. The item of interest is “13. IIS always rejects new lines in response headers (even if ASP.NET enableHeaderChecking is set to false)”

I didn’t realize this change had been made, but apparently, when using IIS 7.0 or above, ASP.Net won’t allow newline characters in a response header. This is good news, since there is very little reason to allow new lines in a response header: if you need another line, just create a new header. This is a great mitigation, and with the default configuration it helps protect ASP.Net applications from Response Splitting and HTTP Header Injection attacks.
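Because this protection comes from the server, it can still be worth validating values yourself before they reach a header or cookie, for example if the application ever runs somewhere other than IIS 7.0 or above. The following is a minimal C# sketch of that idea. `HeaderGuard` is a hypothetical helper, not part of ASP.Net, and it rejects the value rather than encoding it, so injection attempts surface as errors.

```csharp
using System;

public static class HeaderGuard
{
    // True if the value contains CR, LF, or another control character
    // (tab is allowed), none of which belong in a header value.
    public static bool ContainsUnsafeChars(string value)
    {
        foreach (char c in value)
        {
            if (c != '\t' && char.IsControl(c))
                return true;
        }
        return false;
    }

    // Returns the value unchanged if it is safe; otherwise throws.
    public static string Require(string value)
    {
        if (value == null)
            throw new ArgumentNullException(nameof(value));
        if (ContainsUnsafeChars(value))
            throw new ArgumentException("Header value contains CR, LF, or other control characters.", nameof(value));
        return value;
    }
}
```

For example, `Response.AppendHeader("test", HeaderGuard.Require(userValue));`, where `userValue` stands for whatever untrusted input you are placing in the header. Note that the literal text "%0D%0A" passes the check, since it is only harmless characters until something decodes it.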

Understanding the framework that you use and the server it runs on is critical to fully understanding your security risks. These built-in features can really help protect an application. Happy and Secure coding!

I did a podcast on this topic which you can find on the DevelopSec podcast.

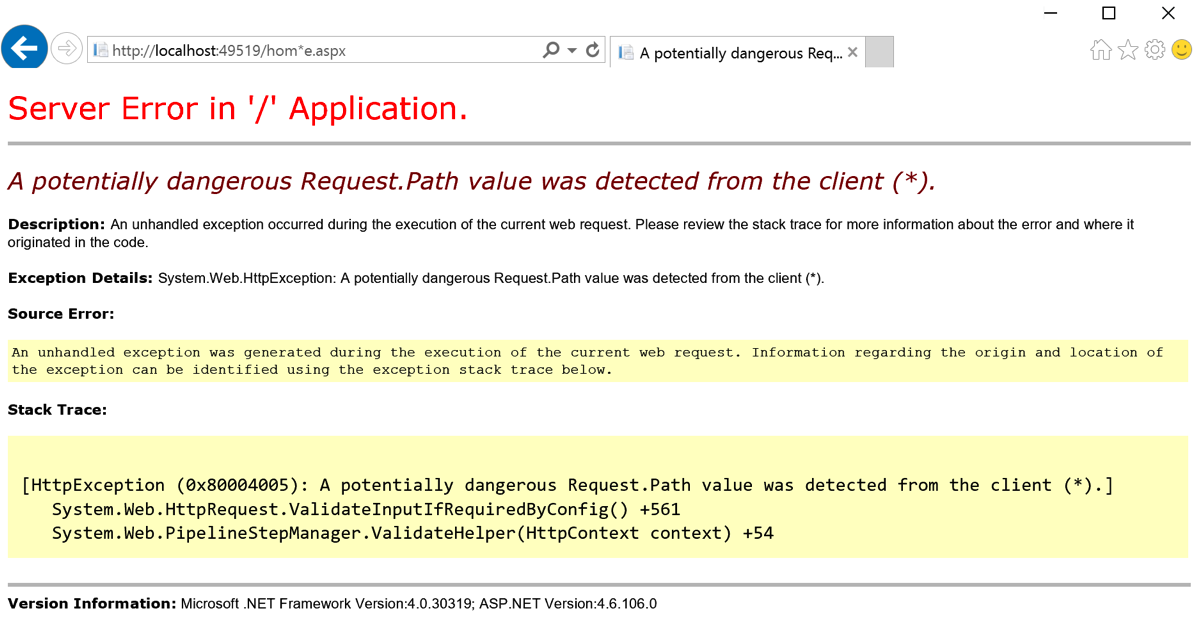

Potentially Dangerous Request.Path Value was Detected…

Filed under: Development, Security

I have discussed request validation many times, the feature behind the “potentially dangerous input” error message you may see when viewing a web page. Another interesting protection in ASP.Net is the built-in Request.Path validation, which is on by default. Have you ever seen the error below when using or testing your application?

The screen above occurred because I placed the (*) character in the URL. ASP.Net defines a default set of characters that are illegal in the URL. This list is defined by the requestPathInvalidCharacters setting and can be configured in the web.config file. By default, the following characters are blocked:

- <

- >

- *

- %

- &

- :

- \

It is important to note that these characters are blocked only in the path portion of the URL; the check does not include the protocol specification or the query string. That should be obvious, since the query string uses the & character to separate parameters.

There are not many cases where your URL needs to use any of these default characters, but if you do need to allow a specific character, you can override the default list in the web.config file. The snippet below sets a new list that removes the < and > characters:

<httpRuntime requestPathInvalidCharacters="*,%,&amp;,:,\" />

Be aware that because the web.config file is an XML file, characters that are special in XML must be escaped when they appear in the list: < as &lt;, > as &gt;, and & as &amp;.
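Conceptually, the check is simple: split the configured list on commas and look for any of those characters in the path, ignoring the query string. The C# sketch below illustrates that idea only. It is not ASP.Net's implementation, `PathCheck` is a hypothetical name, and it assumes the caller passes just the path, without the scheme, host, or query string.

```csharp
using System.Linq;

public static class PathCheck
{
    // The default list from this post, written as a C# string ("\\" is one backslash).
    public const string DefaultList = "<,>,*,%,&,:,\\";

    // Returns true if the path contains any character from the comma-separated list.
    public static bool HasInvalidChar(string path, string invalidList = DefaultList)
    {
        char[] invalid = invalidList
            .Split(',')
            .Where(s => s.Length == 1) // each entry is a single character
            .Select(s => s[0])
            .ToArray();
        return path.IndexOfAny(invalid) >= 0;
    }
}
```

With the default list, `PathCheck.HasInvalidChar("/products/list*")` returns true; passing the shorter list from the override example lets `/a<b` through while `:` is still blocked.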

Remember that modifying the default security settings can expose your application to a greater security risk. Make sure you understand the risk of making these modifications before you perform them. It should be a rare occurrence to require a change to this default list. Understanding the platform is critical to understanding what is and is not being protected by default.

Securing The .Net Cookies

Filed under: Development, Security

I remember years ago when we talked about cookie poisoning, the act of modifying cookies to get the application to act differently. An example was the classic cookie used to indicate a user’s role in the system. Often it would contain 1 for Admin or 2 for Manager, etc. Change the cookie value and all of a sudden you were the new admin on the block. You don’t really hear the phrase cookie poisoning anymore; I guess it was too dark.

There are still security risks around the cookies that we use in our application. I want to highlight 2 key attributes that help protect the cookies for your .Net application: Secure and httpOnly.

Secure Flag

The secure flag tells the browser that the cookie should only be sent to the server if the connection is using the HTTPS protocol. Ultimately this is indicating that the cookie must be sent over an encrypted channel, rather than over HTTP which is plain text.

HttpOnly Flag

The httpOnly flag tells the browser that the cookie should only be sent to the server with requests and should not be accessible to client-side scripts like JavaScript. This attribute helps protect the cookie from being stolen through cross-site scripting flaws.

Setting The Attributes

There are multiple ways to set these attributes of a cookie. Things get a little confusing when talking about session cookies or the forms authentication cookie, but I will cover that as I go. The easiest way to set these flags for all developer created cookies is through the web.config file. The following snippet shows the httpCookies element in the web.config.

<system.web>
  <authentication mode="None" />
  <compilation debug="true" targetFramework="4.6" />
  <httpRuntime targetFramework="4.6" />
  <httpCookies httpOnlyCookies="true" requireSSL="true" />
</system.web>

As you can see, you can set httpOnlyCookies to true to set the httpOnly flag on all of the cookies. In addition, the requireSSL setting sets the secure flag on all of the cookies, with a few exceptions.

Some Exceptions

I stated earlier that there are a few exceptions to the cookie configuration. The first I will discuss is the session cookie. The session cookie in ASP.Net is hard-coded to set the httpOnly attribute. This should override any value set in the httpCookies element in the web.config. The session cookie does not default to requireSSL, but setting that value in the httpCookies element as shown above should work just fine for it.

The forms authentication cookie is another exception to the rules. Like the session cookie, it is hard-coded to httpOnly. The forms element of the web.config has a requireSSL attribute that overrides what is found in the httpCookies element. Simply put, if you don’t set requireSSL="true" in the forms element, the cookie will not have the secure flag, even if requireSSL="true" is set in the httpCookies element.

This is actually a good thing, even though it might not seem so yet. Here is the next thing about the forms requireSSL setting: when you set it, it requires that the connection to the web server is secure. That seems like common sense, but imagine a web farm where the load balancers offload SSL. In this case, while the client connects to your web app over HTTPS, the HTTPS actually stops at the load balancer, and the traffic from there to the web server is HTTP. This will throw an exception in your application.

I am not sure why Microsoft decided to actually check this value, since the secure flag is an instruction for the browser, not the server. If you are in this situation, you can still set the secure flag; you just need to do it a little differently. One option is to have your load balancer set the flag on the responses it sends. Not all devices support this, so check with your vendor. The other option is to set the flag programmatically right before the response is sent to the user. The basic process is to find the cookie and set its Secure property to true.
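At the HTTP level, setting the flag right before the response is sent amounts to appending the Secure attribute to the Set-Cookie header, which is also roughly what a load balancer rule would do. The C# sketch below shows only that string handling. `SetCookieFlags.EnsureSecure` is a hypothetical helper, not an ASP.Net API; inside an ASP.Net application you would normally set the cookie's Secure property instead, as described above.

```csharp
using System;
using System.Linq;

public static class SetCookieFlags
{
    // Appends "; Secure" to a Set-Cookie header value unless the
    // attribute is already present.
    public static string EnsureSecure(string setCookie)
    {
        bool hasSecure = setCookie
            .Split(';')
            .Skip(1) // the first segment is name=value, not an attribute
            .Any(part => part.Trim().Equals("Secure", StringComparison.OrdinalIgnoreCase));

        return hasSecure ? setCookie : setCookie.TrimEnd(' ', ';') + "; Secure";
    }
}
```

Skipping the first segment keeps a cookie that happens to be named "secure" from being mistaken for the attribute.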

Final Thoughts

While there are other security concerns around cookies, I commonly see the secure and httpOnly flags misconfigured. While they do not seem like much, these flags go a long way toward protecting your application. ASP.Net has some tricky configuration for how this works depending on the cookie, so hopefully this helps sort some of it out. If you have questions, please don’t hesitate to contact me. I will be putting together something a little more formal to hopefully clear this up a bit more in the near future.

F5 BigIP Decode with Fiddler

Filed under: Development, Testing

There are many tools out there that decode the F5 BigIP cookie used on some sites, but I haven’t seen anything that just plugs into Fiddler if you use that for debugging. Debugging is also one of the reasons you may want to decode the F5 cookie: if you need to know which server behind the load balancer your request is going to in order to troubleshoot a bug, this is the cookie you need. I won’t go into a long discussion of the F5 cookie, but I have a description of it here.

Most of the examples I have seen use Python to do the conversion. I looked for a JavaScript example, since that is what Fiddler supports in its Custom Rules, but couldn’t really find anything. So I started experimenting and put together a very rough set of functions to decode the cookie value back to its IP address and port. The result goes into a custom column in the Fiddler interface (scroll all the way to the right; it is the last column). If the code identifies the cookie, it attempts to decode it and populate the column. This works for both response and request cookies.
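Before the Fiddler code, here is the decoding on its own. For the default IPv4 format, the cookie value looks like <ip>.<port>.0000, where the IP address is stored as a 32-bit integer with its bytes in reverse order and the port as a 16-bit integer with its two bytes swapped. The following standalone C# sketch (the `BigIpCookie` class is a hypothetical name) does the same math as the Fiddler rules below, using bit shifts instead of hex strings. Other formats, such as IPv6 or encrypted cookies, are not handled.

```csharp
using System;

public static class BigIpCookie
{
    // Decodes "<ip>.<port>.0000" into "a.b.c.d:port".
    public static string Decode(string value)
    {
        string[] parts = value.Split('.');
        if (parts.Length != 3 || parts[2] != "0000")
            throw new FormatException("Not a BIG-IP IPv4 cookie value: " + value);

        // IP: the lowest byte of the integer is the first octet.
        uint ip = uint.Parse(parts[0]);
        string address = string.Format("{0}.{1}.{2}.{3}",
            ip & 0xFF, (ip >> 8) & 0xFF, (ip >> 16) & 0xFF, (ip >> 24) & 0xFF);

        // Port: swap the two bytes of the 16-bit value.
        ushort raw = ushort.Parse(parts[1]);
        int port = ((raw & 0xFF) << 8) | (raw >> 8);

        return address + ":" + port;
    }
}
```

For example, `BigIpCookie.Decode("1677787402.36895.0000")` returns `10.1.1.100:8080`.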

To decode response cookies, you need to update the custom Fiddler rules by adding code to the static function OnBeforeResponse(oSession: Session) method. The following code can be inserted at the end of the function:

var re = /\d+\.\d+\.0{4}/; // Simple regex to identify the BigIP pattern.
if (oSession.oResponse.headers)
{
    for (var x:int = 0; x < oSession.oResponse.headers.Count(); x++)
    {
        if (oSession.oResponse.headers[x].Name.Contains("Set-Cookie")) {
            var cookie : Fiddler.HTTPHeaderItem = oSession.oResponse.headers[x];
            var myArray = re.exec(cookie.Value);
            if (myArray != null && myArray.length > 0)
            {
                for (var i = 0; i < myArray.length; i++)
                {
                    // IP: decimal value -> hex, left-padded to 8 digits, bytes read in reverse order.
                    var index = myArray[i].indexOf(".");
                    var val = myArray[i].substring(0,index);
                    var hIP = parseInt(val).toString(16);
                    while (hIP.length < 8) // pad until all four bytes are present
                    {
                        var pads = "0";
                        hIP = pads + hIP;
                    }
                    var hIP1 = parseInt(hIP.toString().substring(6,8),16);
                    var hIP2 = parseInt(hIP.toString().substring(4,6),16);
                    var hIP3 = parseInt(hIP.toString().substring(2,4),16);
                    var hIP4 = parseInt(hIP.toString().substring(0,2),16);
                    // Port: decimal value -> hex, left-padded to 4 digits, then the two bytes swapped.
                    var val2 = myArray[i].substring(index+1);
                    var index2 = val2.indexOf(".");
                    val2 = val2.substring(0,index2);
                    var hPort = parseInt(val2).toString(16);
                    while (hPort.length < 4) // pad until both bytes are present
                    {
                        var padh = "0";
                        hPort = padh + hPort;
                    }
                    var hPortS = hPort.toString().substring(2,4) + hPort.toString().substring(0,2);
                    var hPort1 = parseInt(hPortS,16);
                    oSession["ui-customcolumn"] += hIP1 + "." + hIP2 + "." + hIP3 + "." + hIP4 + ":" + hPort1 + " ";
                }
            }
        }
    }
}

In order to decode the cookie from a request, you need to add the following code to the static function OnBeforeRequest(oSession: Session) method.

var re = /\d+\.\d+\.0{4}/g; // Simple regex to identify the BigIP pattern; g lets exec() walk every match.
oSession["ui-customcolumn"] = "";
if (oSession.oRequest.headers.Exists("Cookie"))
{
    // One Cookie header can carry several cookies, including more than one BIG-IP pool cookie.
    var cookie = oSession.oRequest["Cookie"];
    var m;
    // exec() returns the next match on each call and null (resetting lastIndex) when done.
    while ((m = re.exec(cookie)) != null)
    {
        var encoded = m[0]; // e.g. "1677787402.36895.0000"
        var index = encoded.indexOf(".");

        // IP: decimal -> hex, left-padded to 8 digits, then read the bytes in reverse order.
        var hIP = parseInt(encoded.substring(0, index), 10).toString(16);
        while (hIP.length < 8)
        {
            hIP = "0" + hIP;
        }
        var hIP1 = parseInt(hIP.substring(6, 8), 16);
        var hIP2 = parseInt(hIP.substring(4, 6), 16);
        var hIP3 = parseInt(hIP.substring(2, 4), 16);
        var hIP4 = parseInt(hIP.substring(0, 2), 16);

        // Port: decimal -> hex, left-padded to 4 digits, then swap the two bytes.
        var val2 = encoded.substring(index + 1);
        val2 = val2.substring(0, val2.indexOf("."));
        var hPort = parseInt(val2, 10).toString(16);
        while (hPort.length < 4)
        {
            hPort = "0" + hPort;
        }
        var hPort1 = parseInt(hPort.substring(2, 4) + hPort.substring(0, 2), 16);

        oSession["ui-customcolumn"] += hIP1 + "." + hIP2 + "." + hIP3 + "." + hIP4 + ":" + hPort1 + " ";
    }
}

Again, this is a rough compilation of code to perform these tasks. I am well aware there are other ways to do this, but this did seem to work. USE AT YOUR OWN RISK. It is your responsibility to make sure any code you add or use is suitable for your needs, and I am not liable for any issues arising from this code. In my testing, it decoded the cookie and didn't present any issues. This is not production code, but an example of how this task could be done.

Just add the code to the custom rules file, visit a site that sets an F5 cookie, and the custom column should show the decoded value.
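To try the rules without a BIG-IP in front of you, you can generate a cookie value for a backend you choose and send it in a request (for example from Fiddler's Composer, under a cookie name such as `BIGipServerweb_pool`; the pool name is only an example). The sketch below is a hypothetical JavaScript helper, runnable in Node.js, that applies the same encoding in the forward direction. If the custom column then shows the address and port you started with, the decoding is working.

```javascript
// Hypothetical test helper: build a BIG-IP style cookie value for a known
// IPv4 address and port, using the byte order the Fiddler rules reverse.
function encodeBigIpCookie(ip, port) {
  const octets = ip.split(".").map(Number);
  if (octets.length !== 4 || octets.some(o => !Number.isInteger(o) || o < 0 || o > 255)) {
    throw new Error("Expected a dotted IPv4 address, got: " + ip);
  }
  if (!Number.isInteger(port) || port < 0 || port > 0xFFFF) {
    throw new Error("Expected a port between 0 and 65535, got: " + port);
  }
  // First octet goes in the lowest byte (little-endian).
  const ipNum = octets[0] + octets[1] * 256 + octets[2] * 65536 + octets[3] * 16777216;
  // Swap the two bytes of the port.
  const portNum = ((port & 0xFF) << 8) | (port >> 8);
  return ipNum + "." + portNum + ".0000";
}

console.log(encodeBigIpCookie("10.1.1.100", 8080)); // 1677787402.36895.0000
console.log(encodeBigIpCookie("10.1.1.0", 8192));   // 65802.32.0000
```

The second value is a useful edge case: both the address and the port produce short hex strings, which is exactly where a single-character pad is not enough.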

Static Analysis: Analyzing the Options

Filed under: Development, Security, Testing

When it comes to automated testing for applications, there are two main types: dynamic and static.

- Dynamic scanning is where the scanner analyzes the application in a running state. This method doesn’t have access to the source code or the binary itself, but it is able to see how things function at runtime.

- Static analysis is where the scanner looks at the source code or the binary output of the application. While this type of analysis doesn’t see the code as it is running, it can trace how data flows through the application down to the function level.
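The data-flow tracing described above is often called taint analysis: the tool marks values that come from untrusted input (sources), follows them through assignments, and reports when one reaches a dangerous function (a sink) without passing through a sanitizer. The sketch below is a deliberately tiny, hypothetical model of that idea in JavaScript. The "program" is straight-line code written as a list of steps, and the source, sink and sanitizer names are made up. Real analyzers build data-flow graphs across branches, functions and files, so treat this as an illustration only.

```javascript
// Toy taint-tracking sketch over straight-line code (hypothetical model, not a real tool).
// Each step calls a function on some arguments and optionally assigns the result.
const SOURCES = new Set(["request.query.id"]); // untrusted input
const SINKS = new Set(["db.query"]);           // functions that must not receive tainted data
const SANITIZERS = new Set(["toInt"]);         // functions whose output is considered clean

function findTaintedSinks(program) {
  const tainted = new Set();
  const findings = [];
  for (const step of program) {
    const anyTainted = step.args.some(a => SOURCES.has(a) || tainted.has(a));
    if (SINKS.has(step.call)) {
      if (anyTainted) findings.push({ line: step.line, sink: step.call });
      continue;
    }
    if (step.assign) {
      // The result inherits taint from its arguments unless a sanitizer produced it.
      if (anyTainted && !SANITIZERS.has(step.call)) {
        tainted.add(step.assign);
      } else {
        tainted.delete(step.assign);
      }
    }
  }
  return findings;
}

// Line 3 runs a query built from raw input; line 6 runs one built from a sanitized integer.
const program = [
  { line: 1, assign: "id", call: "copy", args: ["request.query.id"] },
  { line: 2, assign: "sql", call: "concat", args: ["SELECT * FROM users WHERE id=", "id"] },
  { line: 3, assign: null, call: "db.query", args: ["sql"] },
  { line: 4, assign: "safeId", call: "toInt", args: ["request.query.id"] },
  { line: 5, assign: "sql2", call: "concat", args: ["SELECT * FROM users WHERE id=", "safeId"] },
  { line: 6, assign: null, call: "db.query", args: ["sql2"] },
];

console.log(findTaintedSinks(program)); // [ { line: 3, sink: 'db.query' } ]
```

A dynamic scanner would only catch the line 3 problem if it happened to send a malicious `id`; tracing the flow lets a static tool flag it without running anything.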

Dynamic scanning, an important component of any secure development workflow, analyzes a system as it is running. Before the application is running, the focus shifts to the source code, which is where static analysis fits in. At this stage it is possible to identify many common vulnerabilities while integrating into your build processes.

If you are thinking about adding static analysis to your process, there are a few things to consider. Keep in mind that no single factor should make the decision; budget, in-house experience, application type and other factors combine to determine the right choice.

Disclaimer: I don’t endorse any of the products I talk about here. I do have direct experience with the ones I mention, which is why they are mentioned. I prefer not to speak about products I have never used.

Budget

I hate to list this first, but honestly it is a pretty big factor in your implementation of static analysis. The options for static analysis range from FREE to VERY EXPENSIVE, so it is good to have an idea of your budget up front to better understand which options may be right.

Free Tools

There are a few free tools out there that may work for your situation. Unlike many of the commercial tools, which support most of the common languages, most free tools are limited to a single programming language. For .Net developers, CAT.Net is the first static analysis tool that comes to mind. The downside is that it has not been updated in a long time; while it may still help a little, it will not compare to many of the commercial tools that are available.

In the Ruby world, I have used Brakeman, which worked fairly well. You may have to do a little fiddling to get it up and running properly, but if you are a Ruby developer this may be a simple task.

Managed Services or In-House

Can you manage a scanner in-house or is this something better delegated to a third party that specializes in the technology?

This can be a difficult question because it may involve many facets of your development environment. Hosting the solution in-house, as with HP’s Fortify SCA, may require a lot more internal knowledge than a managed solution. Do you have people available who know the product or can learn it? Given the right resources, in-house tools can be very beneficial. One of the biggest roadblocks to in-house solutions is cost: most of them are very expensive. Here are a few in-house benefits:

- Ability to integrate directly into your Continuous Integration (CI) operations

- Ability to customize the technology for your environment/workflow

- Ability to create extensions to tune the results
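As a rough illustration of the CI and tuning points above, a build step can parse the scanner's report, drop findings the team has already reviewed (for example, confirmed false positives), and fail the build if anything at or above a chosen severity remains. The JavaScript sketch below assumes a simplified report of `{ id, severity }` objects and a made-up severity scale; every real tool has its own report schema, so the field names would need mapping.

```javascript
// Minimal CI gate sketch. The finding shape ({ id, severity }) and the severity
// scale are assumptions; map your scanner's real report fields onto them.
const SEVERITY_RANK = { Low: 1, Medium: 2, High: 3, Critical: 4 };

// Returns the findings that should block the build and the exit code to use.
function gate(findings, threshold, reviewedIds) {
  const minRank = SEVERITY_RANK[threshold];
  if (minRank === undefined) {
    throw new Error("Unknown severity threshold: " + threshold);
  }
  const blocking = findings.filter(f =>
    (SEVERITY_RANK[f.severity] || 0) >= minRank && !reviewedIds.has(f.id));
  return { blocking: blocking, exitCode: blocking.length > 0 ? 1 : 0 };
}

// Example with made-up findings; one High finding was triaged as a false positive.
const findings = [
  { id: "sql-injection:app/users.js:42", severity: "High" },
  { id: "weak-hash:lib/util.js:7", severity: "Medium" },
  { id: "xss:views/profile.js:19", severity: "High" },
];
const reviewed = new Set(["xss:views/profile.js:19"]);
const result = gate(findings, "High", reviewed);
console.log(result.exitCode, result.blocking.map(f => f.id));
// 1 [ 'sql-injection:app/users.js:42' ]
```

In a real pipeline you would read the report from disk and set `process.exitCode = result.exitCode` so the CI server marks the build as failed. Keeping the reviewed IDs in version control also gives the team an auditable record of what was suppressed and why.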

Choosing to go with a managed solution works well for many companies. Whether it is because the development team is small, resources aren’t available or the budget is limited, using a 3rd party may be the right solution. There is always the question of whether you are OK with sending your code to a 3rd party, but many companies accept this to get the solution they need. Many managed services have the additional benefit of reducing false positives in the results. Triaging false positives can be one of the most time-consuming parts of using a static analysis tool, right up there with getting it set up and configured properly. Some scans may return tens of thousands of results. Weeding through all of those can be very time consuming and take a toll on the poor person stuck doing it. Having a company manage that portion can be very beneficial and cost effective.

Conclusion

Picking the right static analysis solution is important, but can be difficult. Take the time to determine your end goal for implementing static analysis. Are you looking for something that is good but not customizable to your environment, or something that is highly extensible and closely integrated with your workflow? Unfortunately, our budget may sometimes limit what we can do, but we have to start someplace. Take the time to talk to other people who have used the solutions you are looking at. Has their experience been good? What did or do they like? What don’t they like? Remember that static analysis is not the complete solution, but rather one component of a solution. Dropping it into your workflow won’t make you secure, but if implemented properly it will help decrease the attack surface.

A Pen Test is Coming!!

Filed under: Development, Security, Testing

You have been working hard to create the greatest app in the world. OK, so maybe it is just a simple business application, but it is still important to you. You have put countless hours of hard work into creating this masterpiece. It looks awesome, and it does everything the business has asked for. Then you get the email from security: your application will undergo a penetration test in two weeks. Your heart skips a beat and sinks a little as you recall everything you have heard about this experience. Most likely, your immediate reaction is to go on the defensive. Why would your application need a penetration test? Of course it is secure; we use HTTPS. No one would attack us; we are small. Take a breath. It is going to be all right.

All too often, when I go into a penetration test, the developers start on the defensive. They don’t really understand why these ‘other’ people have to come in and test their application. I understand the concerns. History has shown that many of these engagements are considered truly adversarial. The testers jump for joy when they find a security flaw. They tell you how bad the application is and how simple the fix is, leaving you feeling about the size of an ant. This is often due to a lack of good communication skills.

Penetration testing is adversarial. It is an offensive assessment to find security weaknesses in your systems, an attempt to simulate an attacker against your system. Of course there are many differences, such as scope, timing and rules, but the goal is the same: let’s see what we can do on your system. Unfortunately, I find that many testers don’t have the communication skills to relay the information back to the business and developers in a positive way. I can’t tell you how many times I have heard people describe their job as great because they get to come in, tell you how bad you suck and then leave. If that is your penetration tester, find a new one. First, that attitude breaks down communication with the client and doesn’t help promote a secure atmosphere. We don’t get anywhere by belittling the teams that have worked hard to create their application. Second, a penetration test should provide solid recommendations to the client on how to resolve the issues identified. Just listing a bunch of flaws is fairly useless to a company.

These engagements should be worth everyone’s time. There should be positive communication between the developers and the testing team. Remember that many engagements are short-lived, so the more information you can provide, the better the assessment you are going to get. The engagement should be helpful: with the right company, you will get a solid assessment and recommendations that you can act on. If you don’t get that, it is time to start looking at another company for testing. Make sure you are ready for the test. If the engagement requires an environment to test in, have it all set up, including test data (if needed). The testers want to hit the ground running. If credentials are needed, make sure those are available too. The more help you can be, the more you will benefit from the experience.

As much as you don’t want to hear it, there is a very high chance the test will find vulnerabilities. While it would be great if applications didn’t have vulnerabilities, it is fairly rare to find one that has none. Use this experience to learn and train on security issues. Take the feedback as constructive criticism, not as someone attacking you. Trust me, you want the pen testers to find these flaws before a real attacker does.

Remember that this is for your benefit. We as developers also need to stay positive. The last thing you want to do is challenge the pen testers by insisting your app is not vulnerable; the teams that usually do that are the most vulnerable. Stay positive and it will be a great learning experience.