Securing the Forms Authentication Cookie with the Secure Flag

Filed under: Development, Security

One of the recommendations for securing cookies within a web application is to apply the Secure attribute. Typically, this is only a browser directive: it tells the browser to include the cookie with a request only if the request is made over HTTPS. This helps prevent the cookie from being sent over an insecure connection, like HTTP. There is a special circumstance around the Microsoft .Net Forms Authentication cookie that I want to cover, as it can become a difficult challenge.

The recommended way to set the secure flag on the forms authentication cookie is to set the requireSSL attribute in the web.config as shown below:

<authentication mode="Forms">
  <forms name="Test.web" defaultUrl="~/Default" loginUrl="~/Login" path="/" requireSSL="true" protection="All" timeout="20" slidingExpiration="true"/>
</authentication>

In the above code, you can see that requireSSL is set to true.

In your login code, you would have code similar to this once the user is properly validated:

FormsAuthentication.SetAuthCookie(userName, false);

In this case, the application will automatically set the secure attribute.

Simple, right? In most cases this is very straightforward. Sometimes, it isn’t.

Removing HTTPS on Internal Networks

There are many occasions where the application may remove HTTPS on the internal network. This is commonly seen, or used to be commonly seen, when the application is behind a load balancer. The end user would connect using HTTPS over the internet to the load balancer and then the load balancer would connect to the application server over HTTP. One common reason for this was easier inspection of internal network traffic for monitoring by the organization.

Why Does This Matter? Isn’t the Secure Attribute of a Cookie Browser Only?

Microsoft made an interesting decision when they created .Net Forms Authentication. If the requireSSL attribute is set for the authentication cookie (as in the web.config example above), the framework verifies that the connection to the application server is secure when the cookie is set using FormsAuthentication.SetAuthCookie(). This is the only cookie for which the code checks that it is on a secure connection.

This means that if you set requireSSL in the web.config for the forms authentication cookie and the request to the server is over HTTP, .Net will throw an exception.

This code can be seen in https://github.com/microsoft/referencesource/blob/master/System.Web/Security/FormsAuthenticationModule.cs in the ExtractTicketFromCookie method.

The obvious solution is to enable HTTPS on the traffic all the way to the application server. But sometimes as developers, we don’t have that control.

What Can We Do?

There are two options I want to discuss. The first one actually doesn’t provide a complete solution, even though it seems like it would.

Option 1 – FormsAuthentication.GetAuthCookie

At first glance, FormsAuthentication.GetAuthCookie might seem like a good option. Instead of using SetAuthCookie, which we know will break the application in this case, one might try to call GetAuthCookie and just manage the cookie themselves. Here is what that might look like, once the user is validated.

var cookie = FormsAuthentication.GetAuthCookie(userName, false);
cookie.Secure = true;
HttpContext.Current.Response.Cookies.Add(cookie);

Rather than calling SetAuthCookie, here we get a new auth cookie, set the secure flag ourselves, and then add it to the cookies collection manually. This is similar to what the SetAuthCookie method does, except that it is not setting the secure flag based on the web.config setting. That is the key difference here.

Keep in mind that GetAuthCookie returns a new auth ticket each time it is called. It does not return the ticket or cookie that was created if you previously called SetAuthCookie.

So why doesn’t this work completely?

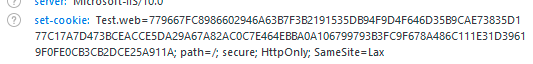

Upon initial testing, this method appears to work. If you run the application and log in, you will see that the cookie does have the secure flag set. This is just like our initial response at the beginning of this article. The issue here comes with sliding expiration. If you remember, in our web.config, we had slidingExpiration set to true (the default is true, so it was set in the config just as a visual aid).

<authentication mode="Forms">
  <forms name="Test.web" defaultUrl="~/Default" loginUrl="~/Login" path="/" requireSSL="true" protection="All" timeout="20" slidingExpiration="true"/>
</authentication>

Sliding expiration means that once the ticket has passed half of its lifetime and is submitted with a request, it is automatically renewed. This allows an active user to avoid re-authenticating after the timeout. This renewal occurs, again, in https://github.com/microsoft/referencesource/blob/master/System.Web/Security/FormsAuthenticationModule.cs in the OnAuthenticate method.

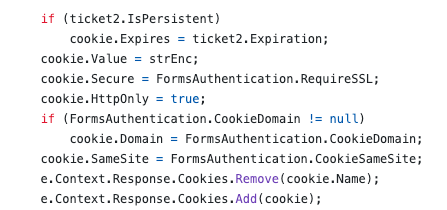

We can see in the above code that the secure flag is again being set by the FormsAuthentication.RequireSSL web.config setting. So even though we jumped through some hoops to set it manually during the initial authentication, every ticket renewal will reset it back to the config setting.

In our web.config we had a timeout set to 20 minutes. So requesting a page after 12 minutes will result in the application setting a new FormsAuth cookie without the Secure attribute.
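The timing can be checked with a small calculation. The JavaScript below is only a model of the half-lifetime rule described above, not the framework's code. The function name shouldRenew and its minute-based arguments are assumptions I made for illustration.

```javascript
// Model of the sliding-expiration rule described above: a ticket is renewed
// once more than half of its lifetime has elapsed. Names are illustrative.
function shouldRenew(issuedAtMin, timeoutMin, nowMin) {
  const elapsed = nowMin - issuedAtMin;
  const remaining = issuedAtMin + timeoutMin - nowMin;
  if (remaining <= 0) return false; // ticket already expired; user must log in again
  return elapsed > remaining;
}

const timeout = 20; // minutes, matching the web.config example
console.log(shouldRenew(0, timeout, 5));  // false: still in the first half
console.log(shouldRenew(0, timeout, 12)); // true: a renewed cookie is issued
console.log(shouldRenew(0, timeout, 25)); // false: ticket has expired
```

With a 20 minute timeout, a request made after the 10 minute mark triggers a renewal, and with the GetAuthCookie approach that renewed cookie is the one that loses the Secure attribute.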

This option would most likely get scanners and initial testers to believe the problem was remediated. However, after a potentially short period of time (depending on your timeout setting), the cookie becomes insecure again. I don’t recommend trying to set the secure flag in this manner.

Option 2 – Global.asax

Another option is to use the Global.asax Application_EndRequest event to set the cookie to secure. With this configuration, the web.config would not set requireSSL to true and the authentication would just use the FormsAuthentication.SetAuthCookie() method. Then, in the Global.asax file we could have something similar to the following code.

protected void Application_EndRequest(object sender, EventArgs e)
{
    if (Response.Cookies.Count > 0)
    {
        // Mark every cookie on the outgoing response as Secure.
        for (int i = 0; i < Response.Cookies.Count; i++)
        {
            Response.Cookies[i].Secure = true;
        }
    }
}

The above code will loop through all the cookies, setting the secure flag on them on every request. It is possible to go one step further and check the cookie name to see if it matches the forms authentication cookie before setting the secure flag, but hopefully your site runs on HTTPS so there should be no harm in all cookies having the secure flag set.

There has been debate on how well this method works, based on when the EndRequest event fires and whether it fires for all requests. In my local testing, this method appears to work and the secure flag is set properly. However, that was local testing with minimal traffic. I cannot verify the effectiveness on a site with heavy loads or load balancing or other configurations. I recommend testing this out thoroughly before using it.

Conclusion

For something that should be so simple, this situation can be a headache for developers that don’t have any control over the connection to their application. In the end, the ideal situation would be to enable HTTPS to the application server so the requireSSL flag can be set in the web.config. If that is not possible, then there are some workarounds that will hopefully work. Make sure you are doing the appropriate testing to verify the workaround works 100% of the time and not just once or twice. Otherwise, you may just be checking a box to show an example of it being marked secure, when it is not in all cases.

Disabling SpellCheck on Sensitive Fields

Filed under: Development, Security

Do you know what happens when a browser performs spell checking on an input field?

Depending on the configuration of the browser, for example with the enhanced spell check feature of Chrome, it may be sending those values out to Google. This could potentially put sensitive data at risk so it may be a good idea to disable spell checking on those fields. Let’s see how we can do this.

Simple TextBox

<input type="text" spellcheck="false">

Text Area

<textarea spellcheck="false"></textarea>

You could also cover the entire form by setting it at the form level as shown below:

<form spellcheck="false">

Conclusion

It is important to point out that password fields can also be vulnerable to this if they have the “show password” option. In these cases, it is recommended to disable spell checking on the password field as well as other sensitive fields.

If the field might be sensitive, and doesn’t benefit from spellcheck, it might be a good idea to disable this feature.

Chrome is making some changes… Are you Ready?

Filed under: Development, Security

Last year, Chrome announced that it was changing cookies to default to SameSite=Lax if no SameSite setting is explicitly set. I wrote about this change last year (https://www.jardinesoftware.net/2019/10/28/samesite-by-default-in-2020/). This change could have an impact on some sites, so it is important that you test this out. The changes are supposed to start rolling out in February (this month). The linked post shows how to force these defaults in both Firefox and Chrome.

In addition to this, Chrome has announced that it is going to start blocking mixed-content downloads (https://blog.chromium.org/2020/02/protecting-users-from-insecure.html). In this case, they are starting in Chrome 83 (June 2020) with blocking executable file downloads (.exe, .apk) that are over HTTP but requested from an HTTPS site.

The issue at hand is that users are misled into thinking the download is secure because the requesting page indicates it is over HTTPS. There isn’t a way for them to clearly see that the request is insecure. The linked Chrome blog describes a timeline of how they will slowly block all mixed-content types.

For many sites this might not be a huge concern, but this is a good time to check your sites to determine if you have any type of mixed content and ways to mitigate this.

You can identify mixed content on your site by using the JavaScript Console. It can be found under the Developer Tools in your browser. The console displays a warning when it identifies mixed content. There may also be some scanners you can use that will crawl your site looking for mixed content.
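If you have a page's HTML as a string, a quick first pass can also be scripted. The sketch below is a rough heuristic of my own, not a replacement for the browser console or a real scanner. The function name findInsecureUrls and the example hosts are made up for illustration.

```javascript
// Rough heuristic: lists http:// URLs found in src/href attributes of an
// HTML string. A regular expression is not a real HTML parser, so treat the
// result as a starting point for manual review.
function findInsecureUrls(html) {
  const pattern = /\b(?:src|href)\s*=\s*(["'])(http:\/\/[^"']*)\1/gi;
  return Array.from(html.matchAll(pattern), (m) => m[2]);
}

// Hypothetical page content served over HTTPS.
const page = `
  <script src="https://cdn.example/app.js"></script>
  <img src="http://static.example/logo.png">
  <a href='http://downloads.example/setup.exe'>Download</a>`;

console.log(findInsecureUrls(page));
```

Anything this returns for a page served over HTTPS is a candidate for mixed content, including the HTTP download links that Chrome is starting to block.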

To help mitigate this from a high level, you could implement CSP to upgrade insecure requests:

Content-Security-Policy: upgrade-insecure-requests

This can help by upgrading insecure requests, but it is not supported in all browsers. The following post goes into a lot of detail on mixed content and some ways to resolve it: https://developers.google.com/web/fundamentals/security/prevent-mixed-content/fixing-mixed-content

The increase in protections of the browsers can help reduce the overall threats, but always remember that it is the developer’s responsibility to implement the proper design and protections. Not all browsers are the same and you can’t rely on the browser to provide all the protections.

XXE DoS and .Net

XML External Entity (XXE) vulnerabilities can be more than just a risk of remote code execution (RCE), information leakage, or server side request forgery (SSRF). A denial of service (DoS) attack is commonly overlooked. However, given a misconfigured XML parser, it may be possible for an attacker to cause a denial of service attack and block your application’s resources. This would limit the ability of a user to access the expected application when needed.

In most cases, the parser can be configured to just ignore any entities, by disabling DTD parsing. As a matter of fact, many of the common parsers do this by default. If the DTD is not processed, then even the denial of service risk should be removed.

For this post, I want to talk about the case where DTDs are parsed and focus specifically on the denial of service aspect. One of the properties that becomes important when working with .Net and XML is the MaxCharactersFromEntities property.

The purpose of this property is to limit how long the value of an entity can be. This is important because often times in a DoS attempt, the use of expanding entities can cause a very large request with very few actual lines of data. The following is an example of what a DoS attack might look like in an entity.

<?xml version="1.0" encoding="UTF-8"?> <!DOCTYPE foo [ <!ELEMENT foo ANY > <!ENTITY dos 'dos' > <!ENTITY dos1 '&dos;&dos;&dos;&dos;&dos;&dos;&dos;&dos;&dos;&dos;&dos;&dos;' > <!ENTITY dos2 '&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;&dos1;' > <!ENTITY dos3 '&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;&dos2;' > <!ENTITY dos4 '&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;&dos3;' > <!ENTITY dos5 '&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;&dos4;' > <!ENTITY dos6 '&dos5;&dos5;&dos5;&dos5;&dos5;&dos5;&dos5;&dos5;&dos5;&dos5;&dos5;&dos5;' >]>

Notice in the above example we have multiple entities that each reference the previous one multiple times. This results in a very large string being created when dos6 is actually referenced in the XML code. This would probably not be large enough to actually cause a denial of service, but you can see how quickly this becomes a very large value.
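To get a feel for the growth, the expanded size can be computed without actually building the string. The sketch below uses a smaller, made-up set of entities (ten references per level) rather than the exact payload above. The function name expandedLength is my own.

```javascript
// Computes how many characters an internal entity expands to, without ever
// building the expanded string. Assumes there are no circular references
// (XML does not allow them).
function expandedLength(entities, name, cache = new Map()) {
  if (cache.has(name)) return cache.get(name);
  if (!entities.has(name)) throw new Error(`Unknown entity: ${name}`);
  const value = entities.get(name);
  let total = value.length;
  for (const m of value.matchAll(/&([A-Za-z_][\w.-]*);/g)) {
    if (!entities.has(m[1])) continue; // leave unknown references as literal text
    // Swap the length of the reference itself for the length of its expansion.
    total += expandedLength(entities, m[1], cache) - m[0].length;
  }
  cache.set(name, total);
  return total;
}

// Illustrative entities: each level references the previous one ten times.
const entities = new Map([
  ["dos", "dos"],
  ["dos1", "&dos;".repeat(10)],
  ["dos2", "&dos1;".repeat(10)],
  ["dos3", "&dos2;".repeat(10)],
]);

for (const name of entities.keys()) {
  console.log(name, expandedLength(entities, name));
}
// dos 3
// dos1 30
// dos2 300
// dos3 3000
```

Each level multiplies the size by the number of references it contains, so a handful of additional levels is enough to turn a few lines of XML into a very large string.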

To help protect the XML parser and the application, the MaxCharactersFromEntities property limits how large this expansion can get. Once the expansion reaches the maximum, the parser throws a System.Xml.XmlException: ‘The input document has exceeded a limit set by MaxCharactersFromEntities’.

The Microsoft documentation (linked above) states that the default value is 0. This means that it is undefined and there is no limit in place. Through my testing, it appears that this is true for ASP.Net Framework versions up to 4.5.1. In 4.5.2 and above, as well as .Net Core, the default value for this property is 10,000,000. This is most likely a small enough value to protect against denial of service with the XmlReader object.
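The effect of such a cap can be sketched in a few lines. This is a simplified JavaScript model of the idea, not the .Net implementation, and the real property may count characters differently. The function name expandWithLimit is hypothetical, and 0 is treated as "no limit" to mirror the default described above.

```javascript
// Expands internal entity references, but stops as soon as the number of
// characters produced from entities exceeds maxChars (0 means no limit).
// Assumes there are no circular references.
function expandWithLimit(entities, text, maxChars) {
  let fromEntities = 0;
  const out = [];

  function write(chunk, insideEntity) {
    if (insideEntity) {
      fromEntities += chunk.length;
      if (maxChars > 0 && fromEntities > maxChars) {
        throw new RangeError("entity expansion limit exceeded");
      }
    }
    out.push(chunk);
  }

  function emit(value, insideEntity) {
    let last = 0;
    for (const m of value.matchAll(/&([A-Za-z_][\w.-]*);/g)) {
      write(value.slice(last, m.index), insideEntity);
      if (entities.has(m[1])) emit(entities.get(m[1]), true);
      else write(m[0], insideEntity); // unknown reference stays as-is
      last = m.index + m[0].length;
    }
    write(value.slice(last), insideEntity);
  }

  emit(text, false);
  return out.join("");
}

// Illustrative entities, much smaller than a real attack payload.
const sample = new Map([
  ["a", "xx"],
  ["b", "&a;&a;&a;"],
]);

console.log(expandWithLimit(sample, "<r>&b;</r>", 0)); // <r>xxxxxx</r>
```

With a limit in place, the parser gives up early instead of continuing to allocate memory, which is what keeps the expansion from turning into a denial of service.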

XSS in Script Tag

Filed under: Development, Security, Testing

Cross-site scripting is a pretty common vulnerability, even with many of the new advances in UI frameworks. One of the first things we mention when discussing the vulnerability is to understand the context. Is it HTML, Attribute, JavaScript, etc.? This understanding helps us better understand the types of characters that can be used to expose the vulnerability.

In this post, I want to take a quick look at placing data within a <script> tag. In particular, I want to look at how embedded <script> tags are processed. Let’s use a simple web page as our example.

<html>
<head>
</head>
<body>
<script>
var x = "<a href=test.html>test</a>";
</script>
</body>
</html>

The above example works as we expect. When you load the page, nothing is displayed. The link tag embedded in the variable is treated as a string, not parsed as a link tag. What happens, though, when we embed a <script> tag?

<html>
<head>
</head>
<body>
<script>
var x = "<script>alert(9)</script>";
</script>
</body>
</html>

In the above snippet, nothing actually happens on the screen, meaning that the alert box does not trigger. This often misleads people into thinking the code is not vulnerable to cross-site scripting. If the link tag is not processed, why would the script tag be? In many situations, the understanding is that we need to break out of the (") delimiter to start writing our own JavaScript commands, for example by submitting a payload of (test";alert(9);t = "). This type of payload would break out of the x variable and add new JavaScript commands. Of course, this doesn’t work if the (") character is properly encoded to not allow breaking out.

Going back to our previous example, we may have overlooked something very simple. It wasn’t that the script wasn’t executing because it wasn’t being parsed. Instead, it wasn’t executing because our JavaScript was bad. Our issue was that we were attempting to open a <script> within a <script>. What if we modify our value to the following:

<html>
<head>
</head>
<body>
<script>
var x = "</script><script>alert(9)</script>";
</script>
</body>
</html>

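In the above code, we are first closing out the original <script> tag and then we are starting a new one. The HTML parser ends a script block at the first </script> it finds, even when that text sits inside a JavaScript string, so the browser sees a new, well-formed <script> block. This removes the nesting problem, and when the page is loaded, the alert box will appear.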

This technique works in many places where a user can control the text returned within the <script> element. Of course, the important remediation step is to make sure that data is properly encoded when returned to the browser. By default, Content Security Policy may not be an immediate solution, since this situation would indicate that inline scripts are allowed. However, limiting inline scripts to ones with a registered nonce would help prevent this technique. This reference shows setting the nonce (https://developer.mozilla.org/en-US/docs/Web/HTTP/Headers/Content-Security-Policy/script-src).
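As one way to picture the encoding step, here is a minimal sketch of serializing a value for an inline script block. It assumes the value is JSON-serializable, and the helper name toInlineScriptLiteral is my own. A vetted encoding library is preferable in a real application.

```javascript
// Serializes a value for embedding inside an inline <script> block.
// JSON.stringify handles quotes and backslashes; the extra replacements keep
// the HTML parser from ever seeing "</script>" or "<!--" inside the literal.
function toInlineScriptLiteral(value) {
  return JSON.stringify(value)
    .replace(/</g, "\\u003c")
    .replace(/>/g, "\\u003e")
    .replace(/&/g, "\\u0026")
    .replace(/\u2028/g, "\\u2028")
    .replace(/\u2029/g, "\\u2029");
}

const payload = "</script><script>alert(9)</script>";
const literal = toInlineScriptLiteral(payload);

console.log(`var x = ${literal};`);
// var x = "\u003c/script\u003e\u003cscript\u003ealert(9)\u003c/script\u003e";
```

The browser no longer finds a closing </script> inside the string, while the JavaScript engine still decodes the escapes back to the original characters, so x holds the same text without being executed.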

When testing our applications, it is important to focus on the lack of output encoding and less on the ability to fully exploit a situation. Our secure coding standards should identify the types of encoding that should be applied to outputs. If the encodings are not properly implemented then we are citing a violation of our standards.

JavaScript in an HREF or SRC Attribute

Filed under: Development, Security, Testing

The anchor (<a>) HTML tag is commonly used to provide a clickable link for a user to navigate to another page. Did you know it is also possible to set the HREF attribute to execute JavaScript? A common technique is to use the onclick event of the anchor tag to execute a JavaScript method when the user clicks the link. However, to stop the browser from actually redirecting, the HREF can be set to javascript:void(0);. This cancels the HREF functionality and allows the JavaScript from the onclick to execute as expected.

In the technique above, notice that the HREF is set with a value starting with “javascript:”. This identifier tells the browser to execute the code following that prefix. For those that are security savvy, you might be thinking about cross-site scripting when you hear about executing JavaScript within the browser. For those of you that are new to security, cross-site scripting refers to the ability for an attacker to execute unintended JavaScript in the context of your application (https://www.owasp.org/index.php/Cross-site_Scripting_(XSS)).

I want to walk through a simple scenario of where this could be abused. In this scenario, the application will attempt to track the page the user came from to set up where the Cancel button will redirect to. Imagine you have a list page that allows you to view details of a specific item. When you click the item it takes you to that item page and passes a BackUrl in the query string. So the link may look like:

https://jardinesoftware.com/item.php?backUrl=/items.php

On the page, there is a hyperlink created that sets the HREF to the backUrl property, like below:

<a href="<?php echo $_GET["backUrl"];?>">Back</a>

When the page executes as expected you should get an output like this:

<a href="/items.php">Back</a>

There is a big problem though. The application is not performing any type of output encoding to protect against cross-site scripting. If we instead pass in backUrl="%20onclick="alert(10); we will get the following output:

<a href="" onclick="alert(10);">Back</a>

In the instance above, we have successfully inserted the onclick event by breaking out of the HREF attribute. The bold section identifies the malicious string we added. When this link is clicked it will prompt an alert box with the number 10.

To remedy this, we could (and typically would) use output encoding to block the escape from the HREF attribute. For example, if we escape the double quotes (" -> &quot;), then we cannot get out of the HREF attribute. We can do this (in PHP as an example) using htmlentities() like this:

<a href="<?php echo htmlentities($_GET["backUrl"],ENT_QUOTES);?>">Back</a>

When the value is rendered the quotes will be escaped like the following:

<a href="&quot; onclick=&quot;alert(10);">Back</a>

Notice in this example, the HREF actually has the entire input (in bold), rather than an onclick event actually being added. When the user clicks the link it will try to go to https://www.developsec.com/" onclick="alert(10); rather than execute the JavaScript.

But Wait… JavaScript

It looks like we have solved the XSS problem, but there is a piece still missing. Remember at the beginning of the post how we mentioned the HREF supports the javascript: prefix? That will allow us to bypass the current encodings we have performed. This is because with using the javascript: prefix, we are not trying to break out of the HREF attribute. We don’t need to break out of the double quotes to create another attribute. This time we will set backUrl=javascript:alert(11); and we can see how it looks in the response:

<a href="javascript:alert(11);">Back</a>

When the user clicks on the link, the alert will trigger and display on the page. We have successfully bypassed the XSS protection initially put in place.
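The difference between the two payloads is easy to see side by side. The sketch below uses a rough JavaScript stand-in for htmlentities() with ENT_QUOTES, covering only the characters that matter inside a quoted attribute. The helper name escapeHtmlAttr is my own.

```javascript
// Rough JavaScript stand-in for htmlentities(..., ENT_QUOTES): escapes the
// characters that matter inside a quoted HTML attribute.
function escapeHtmlAttr(value) {
  return String(value)
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#039;");
}

const breakout = '" onclick="alert(10);';
const scheme = "javascript:alert(11);";

console.log(`<a href="${escapeHtmlAttr(breakout)}">Back</a>`);
// <a href="&quot; onclick=&quot;alert(10);">Back</a>
console.log(`<a href="${escapeHtmlAttr(scheme)}">Back</a>`);
// <a href="javascript:alert(11);">Back</a>
```

The second payload contains none of the characters that the encoder touches, which is why it passes through unchanged.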

Mitigating the Issue

There are a few steps we can take to mitigate this issue. Each has its pros and cons, and many can be used in conjunction with each other. Pick the options that work best for your environment.

- URL Encoding – Since the HREF is meant to be a URL, you could perform URL encoding. URL encoding will render the javascript benign in the above instances because the colon (:) will get encoded. You should be using URL encoding for URLs anyway, right?

- Implement Content Security Policy (CSP) – CSP can help limit the ability for inline scripts to be executed. In this case, it is an inline script, so something as simple as Content-Security-Policy: default-src 'self' could be sufficient. Of course, implementing CSP requires research and great care to get it right for your application.

- Validate the URL – It is a good idea to validate that the URL used is well formed and pointing to a relative path. If the system is unable to parse the URL then it should not be used and a default back URL can be substituted.

- URL White Listing – Creating a white list of valid URLs for the back link can be effective at limiting what input is used by the end user. This can cut down on the values that are actually returned blocking any malicious scripts.

- Remove javascript: – This really isn’t recommended as different encodings can make it difficult to effectively remove the string. The other techniques listed above are much more effective.

The above list is not exhaustive, but does give an idea of ways to help reduce the risk of JavaScript within the HREF attribute of a hyper link.
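As an illustration of the validation option, here is a minimal sketch that accepts only site-relative paths and falls back to a default otherwise. The function name safeBackUrl, the /items.php default, and the placeholder base host are assumptions made for the example. The result should still be output-encoded when written into the page.

```javascript
// Accepts only relative paths on the current site; anything else (including
// "javascript:" URLs and "//other-host" URLs) is replaced by a default.
function safeBackUrl(input, fallback = "/items.php") {
  if (typeof input !== "string") return fallback;
  // Must start with exactly one "/" (rejects schemes, "//host" and "/\host").
  if (!/^\/(?![\/\\])/.test(input)) return fallback;
  // Reject control characters such as tabs and newlines.
  if (/[\u0000-\u001f\u007f]/.test(input)) return fallback;
  try {
    const base = "https://example.invalid"; // placeholder origin used for parsing only
    const url = new URL(input, base);
    if (url.origin !== base) return fallback;
    return url.pathname + url.search + url.hash;
  } catch {
    return fallback; // could not be parsed as a URL
  }
}

console.log(safeBackUrl("/items.php?id=5"));       // /items.php?id=5
console.log(safeBackUrl("javascript:alert(11);")); // /items.php
console.log(safeBackUrl("//evil.example/x"));      // /items.php
```

Checking the shape of the URL first means the javascript: prefix never reaches the HREF at all, instead of relying on it being rendered harmless later.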

Iframe SRC

It is important to note that this situation also applies to the IFRAME SRC attribute. It is possible to set the SRC of an IFRAME using the javascript: notation. In doing so, the JavaScript executes when the page is loaded.

Wrap Up

When developing applications, make sure you take this use case into consideration if you are taking URLs from user supplied input and setting that in an anchor tag or IFrame SRC.

If you are responsible for testing applications, take note when you identify URLs in the parameters. Investigate where that data is used. If you see it is used in an anchor tag, look to see if it is possible to insert JavaScript in this manner.

For those performing static analysis or code review, look for areas where the HREF or SRC attributes are set with untrusted data and make sure proper encoding has been applied. This is less of a concern if the base path of the URL has been hard-coded and the untrusted input only makes up parameters of the URL. These should still be properly encoded.

Security Tips for Copy/Paste of Code From the Internet

Filed under: Development, Security

Developing applications has long involved using code snippets found through textbooks or on the Internet. Rather than re-invent the wheel, it makes sense to identify existing code that helps solve a problem. It may also help speed up the development time.

Years ago, maybe 12, I remember a co-worker that had a SQL Injection vulnerability in his application. The culprit: code copied from someone else. At the time, I explained that once you copy code into your application it is now your responsibility.

Here, 12 years later, I still see this type of occurrence. Using code snippets directly from the web in the application. In many of these cases there may be some form of security weakness. How often do we, as developers, really analyze and understand all the details of the code that we copy?

Here are a few tips when working with external code brought into your application.

Understand what it does

If you were looking for code snippets, you should have a good idea of what the code will do. Better yet, you probably have an understanding of what you think that code will do. How rigorously do you inspect it to make sure that is all it does? Maybe the code performs the specific task you set out to complete, but what happens if there are other functions you weren’t even looking for? This may not be as much of a concern with very small snippets. However, larger sections of code could cover up other functionality. This doesn’t mean that the functionality is intentionally malicious. But undocumented, unintended functionality may open up risk to the application.

Change any passwords or secrets

Depending on the code that you are searching, there may be secrets within it. For example, encryption routines are common for being grabbed off the Internet. To be complete, they contain hard-coded IVs and keys. These should be changed when imported into your projects to something unique. This could also be the case for code that has passwords or other hard-coded values that may provide access to the system.

As I was writing this, I noticed a post about the RadAsyncUpload control regarding the defaults within it. While this is not code copy/pasted from the Internet, it highlights the need to understand the default configurations and that some values should be changed to help provide better protections.

Look for potential vulnerabilities

In addition to the above concerns, the code may have vulnerabilities in it. Imagine a snippet of code used to select data from a SQL database. What if that code passed your tests of accurately pulling the queries, but uses inline SQL and is vulnerable to SQL Injection? The same could happen for code vulnerable to Cross-Site Scripting or not checking proper authorization.
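To make the inline SQL problem concrete, the sketch below builds the query text both ways without touching a database. The table, the column names, and the { text, values } shape are made up for illustration. Check your own data access library for how it accepts parameters.

```javascript
// Inline SQL as often found in copied snippets: user input becomes part of
// the statement text.
function buildInlineQuery(name) {
  return "SELECT id, name FROM users WHERE name = '" + name + "'";
}

// Parameterized form: the statement text is constant and the value travels
// separately. The { text, values } shape is illustrative only.
function buildParameterizedQuery(name) {
  return { text: "SELECT id, name FROM users WHERE name = ?", values: [name] };
}

const input = "x' OR '1'='1";

console.log(buildInlineQuery(input));
// SELECT id, name FROM users WHERE name = 'x' OR '1'='1'
console.log(buildParameterizedQuery(input).text);
// SELECT id, name FROM users WHERE name = ?
```

A functional test with a normal name passes for both versions, which is exactly why this kind of weakness survives when only the functionality is verified.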

We have to do a better job of performing code reviews on these external snippets, just as we should be doing it on our custom written internal code. Finding snippets of code that perform our needed functionality can be a huge benefit, but we can’t just assume it is production ready. If you are using this type of code, take the time to understand it and review it for potential issues. Don’t stop at just verifying the functionality. Take steps to vet the code just as you would any other code within your application.